Device-Directed Rendering

The Main Idea

When we produce a rendered image, we don’t usually think about the output device. Instead, we focus on doing good physics and computer science when we render the scene, and then we hand the resulting grid of colors to a device for display. Unfortunately, the range of colors that any given device can show is usually limited. This range, called the device’s color gamut, or simply gamut, varies from one device to another. Printers, monitors, smartphone screens: every kind of display, and every model of each kind, has its own idiosyncratic gamut.

When a device is asked to display a color that it can’t show, it usually shows the closest color available. This usually means just clipping each RGB value in the file to the range [0, 255] and hoping for the best. It’s hard to think of a better strategy for the display device, but this technique can really ruin an image. For example, suppose you want to show an object that has a bright sky-blue color, so one of the pixels that shows the object has RGB value (100, 400, 260). The device would show (100, 255, 255). But this is aqua, and not at all the color you wanted.
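As a minimal sketch of that clipping step (the function name `clip_to_gamut` is made up for illustration, and real devices may do something more elaborate), here is the per-channel clamp applied to the sky-blue pixel from the example:

```python
def clip_to_gamut(rgb, lo=0, hi=255):
    """Clamp each channel independently to [lo, hi], the way a simple display would."""
    return tuple(min(max(channel, lo), hi) for channel in rgb)

wanted = (100, 400, 260)        # the bright sky-blue pixel from the text
shown = clip_to_gamut(wanted)   # what actually reaches the screen
print(wanted, "->", shown)
```

Because each channel is clamped on its own, the ratio between green and blue changes from 400:260 to 255:255, which is why the hue shifts toward aqua.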

This effect causes all kinds of problems. In addition to this color shift, it ruins gradients, because instead of getting nice smoothly-changing colors you suddenly get a sharp edge and then a wall of solid color. And if you’re rendering a 3D scene, this can cause a really bad problem we call the failure of semantic integrity. Suppose you’re rendering an object in the sky-blue color above, and it’s reflected in a dark mirror. The reflection would be in gamut, so you’d see a nicely shaded dark sky-blue in the mirror. But the object itself is out of gamut, so that object would appear as a flat, unshaded, bright aqua. The reflection doesn’t match the object that’s being reflected, and the picture doesn’t make sense any more.
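To make the mismatch concrete, here is a small sketch that shades the same sky-blue color at four brightness levels, once seen directly and once through a dark mirror. The shading factors and the mirror reflectance of 0.5 are assumptions chosen only for illustration.

```python
def clip(rgb, hi=255):
    """Round and clamp each channel to [0, hi]."""
    return tuple(min(max(round(c), 0), hi) for c in rgb)

base = (100, 400, 260)            # the out-of-gamut sky blue from the text
shades = [0.7, 0.8, 0.9, 1.0]     # hypothetical shading factors across the object
mirror = 0.5                      # hypothetical reflectance of the dark mirror

# The object as seen directly, and as seen in the mirror, after clipping.
direct = [clip(tuple(s * c for c in base)) for s in shades]
reflected = [clip(tuple(mirror * s * c for c in base)) for s in shades]

for s, d, r in zip(shades, direct, reflected):
    print(f"shade {s}: direct {d}   reflected {r}")
```

In the direct view the green channel is pinned at 255 for every shade, so the gradient flattens, while the reflected values all stay in gamut and keep their shading.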

Our idea was to modify our rendering algorithm so that the image it produced would fit into a specific device’s gamut without any distortion. To do this, we modify the colors of the scene’s lights and objects and re-render it, creating a new image that can be displayed without distortion. This solves all the problems described above: color shifting, ruined gradients, and loss of semantic integrity.

Here’s the idea. On the left we have a little rendered image, which we call the “target”. Lots of pixels are out of gamut, and so in this image a lot of pixels are clipped and distorted. You can see this by looking at the cylinders: rather than seeing a smooth gradient reflecting the changing shading on each cylinder, there’s just a big vertical bar of solid color. The shading was there in the file, but the clipped pixels lose it.

When we first render a picture, we save the color expressions for the entire tree of operations that ultimately define the color of each pixel. These expressions are just big vector-valued polynomials in the scene’s colors. So we can think of the target image as some ideal image projected into the multidimensional space of allowed images. We set up a huge matrix of partial derivatives of these polynomials with respect to each color, and invert it. Now we use this matrix to move each color from the original scene a little bit towards the target, and re-render. We evaluate how far we still are from the target, and repeat. Eventually we produce a new set of scene colors (that is, the colors of lights, materials, participating media, and so on) such that the image we produce fits entirely within the target device’s gamut. The resulting image is shown on the right. These cylinders are darker, but smoothly shaded. Note that we aren’t doing a local operation on the image file or its pixels; instead, the colors in the original scene description were changed and the image re-rendered so that it fit into the gamut for this device.
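The loop described above can be sketched on a toy problem. This is not the formulation from the paper: it assumes a one-channel "scene" with a single light and two materials, a renderer where each pixel is simply shading × light × material, a gamut of [0, 1], and a damped least-squares (Gauss-Newton style) step computed with a pseudo-inverse via `numpy.linalg.lstsq`. All function names and parameter values are invented for illustration.

```python
import numpy as np

def render(scene, shading):
    """Toy renderer: each pixel is the polynomial shading * light * material."""
    light, mat_a, mat_b = scene
    return np.concatenate([shading * light * mat_a, shading * light * mat_b])

def jacobian(scene, shading):
    """Partial derivative of every pixel with respect to every scene color."""
    light, mat_a, mat_b = scene
    zeros = np.zeros_like(shading)
    d_light = np.concatenate([shading * mat_a, shading * mat_b])
    d_mat_a = np.concatenate([shading * light, zeros])
    d_mat_b = np.concatenate([zeros, shading * light])
    return np.stack([d_light, d_mat_a, d_mat_b], axis=1)

def fit_to_gamut(scene, shading, gamut_max=1.0, step=0.5, tol=1e-3, max_iters=500):
    """Nudge the scene colors until the rendered image fits within the gamut."""
    scene = np.asarray(scene, dtype=float).copy()
    for _ in range(max_iters):
        image = render(scene, shading)
        if image.max() <= gamut_max + tol:
            break
        # The target is the current image projected (clipped) into the gamut.
        target = np.clip(image, 0.0, gamut_max)
        residual = target - image
        # Least-squares solve; rcond guards against the rank-deficient Jacobian.
        delta, *_ = np.linalg.lstsq(jacobian(scene, shading), residual, rcond=1e-10)
        scene += step * delta  # move a little bit towards the target, then re-render
    return scene

shading = np.linspace(0.2, 1.0, 8)      # hypothetical diffuse shading across each object
original = np.array([1.5, 0.9, 0.4])    # light, material A, material B
fitted = fit_to_gamut(original, shading)
image = render(fitted, shading)
print("scene colors:", original, "->", np.round(fitted, 3))
print("brightest pixel:", round(float(image.max()), 4))
```

In this toy setup the light and the over-bright material both get a little darker, while the in-gamut material is nudged up slightly to compensate for the dimmer light, so its pixels barely change. The shading gradient across each object survives because the scene colors were changed, not the pixels.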

Here’s another example. The golden ball is sitting in a little two-sided box with a blue floor, and mirrors for walls. The target device for this image had a smaller gamut than the one above, so it was unable to produce very bright colors. In the target on the left, the highlights on the ball go out of gamut and turn into solid regions of yellow. The floor is also a little too bright and is getting clipped. In the final image on the right, everything is smooth and in gamut.

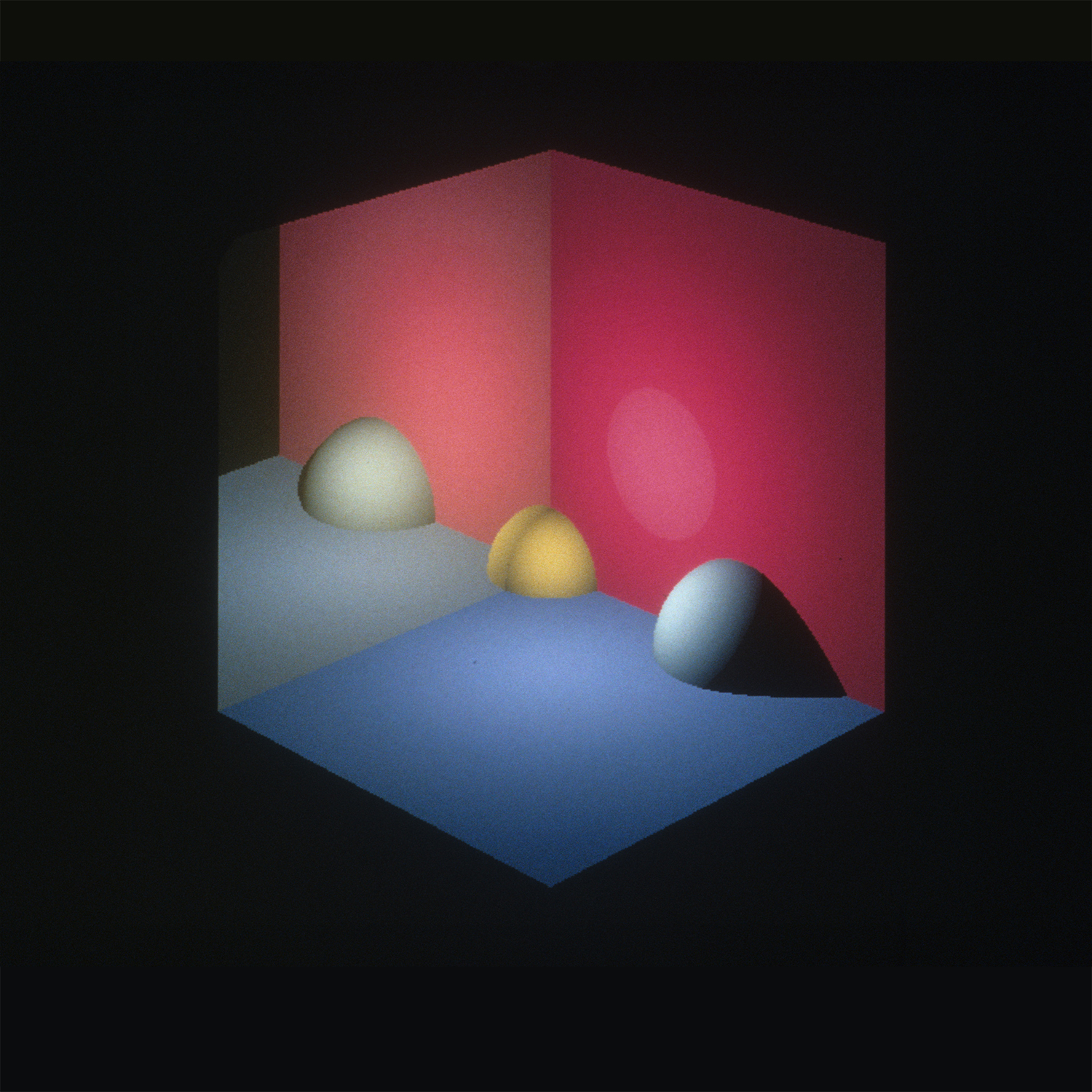

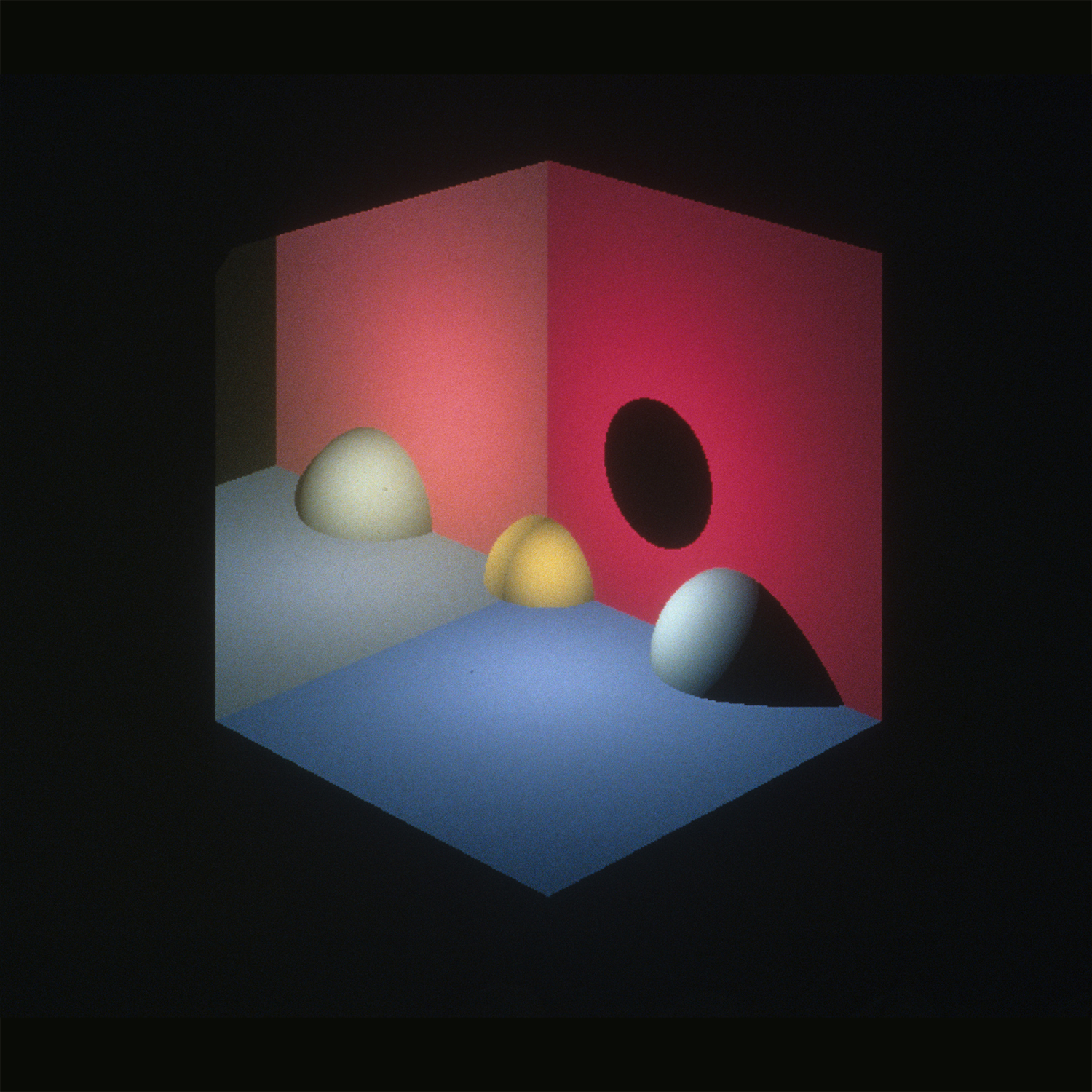

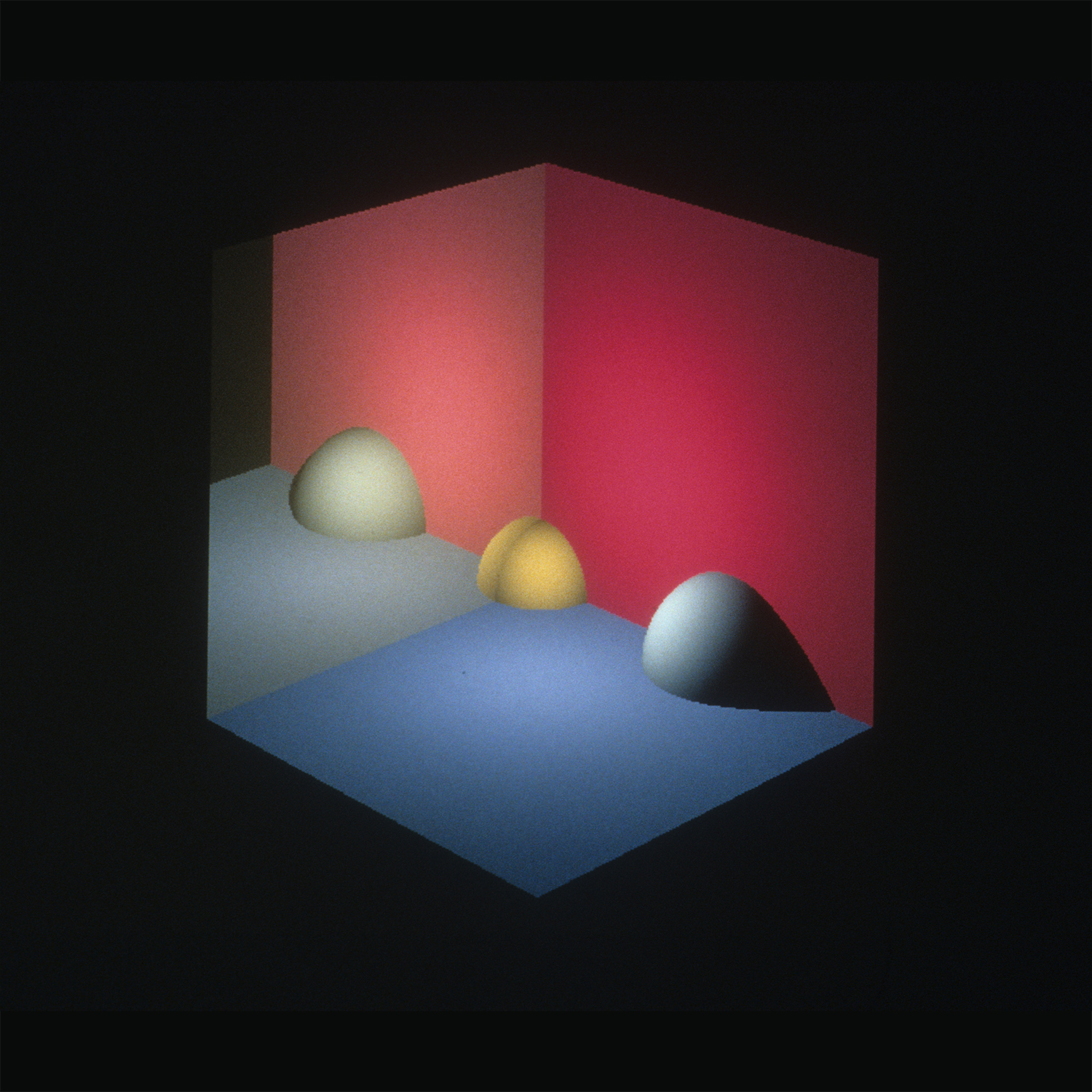

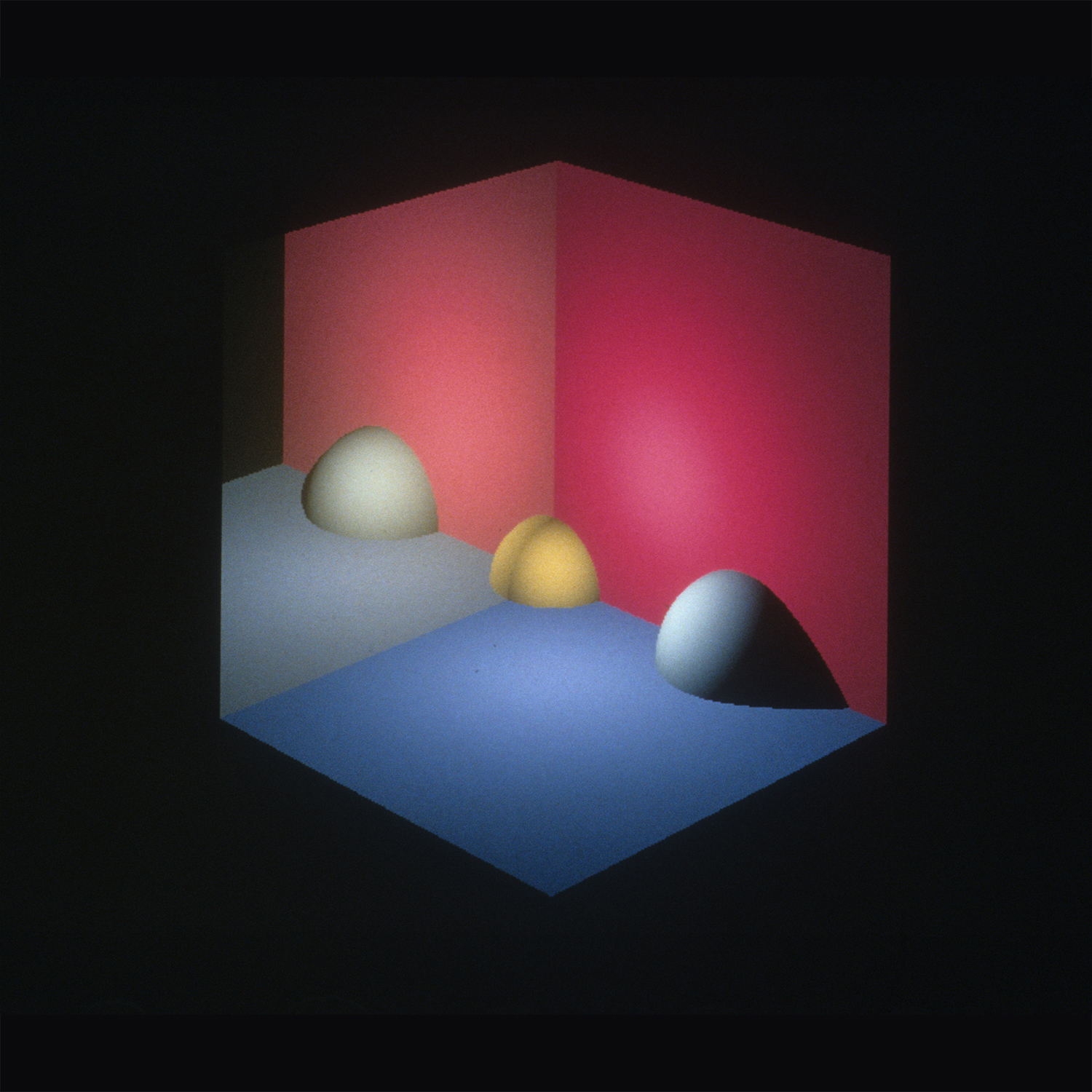

Here’s a final example that shows all the steps. In this scene, a small golden ball is sitting in the corner of a two-sided box. The floor is matte blue, one wall is matte red, and the other wall is a slightly yellow mirror. There’s also a larger white ball sitting between the floor and red wall.

On the left we see the original image, clipped to the output gamut. Notice how the highlight on the red wall is not smoothly shaded, but instead clamps at a bright, light red. But because the mirror is a little bit yellow and a little dark, the reflection of this highlight is within gamut, and doesn’t have that flat region of clamped color. Thus the reflection doesn’t match the thing being reflected, and the picture just doesn’t make sense any more. The next picture to the right shows the out-of-gamut colors by simply not drawing them. This leaves a big black hole on the right wall. One way to bring the picture into gamut is to re-render it by simply making the light source in the scene darker. The third image shows that approach, but now we’ve lost the highlight on the red wall. The picture on the far right shows the result of applying Device-Directed Rendering. The colors have all moved around a very little bit, resulting in an image that is very close to our original but entirely within gamut, and that can therefore be displayed without compromise.
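The two simpler views in this comparison, blanking the out-of-gamut pixels and dimming the light, are easy to sketch. The code below assumes a floating-point image with a gamut of [0, 1], and it treats "making the light darker" as a uniform scale of the whole image, which only matches a real re-render when a single light drives purely linear shading. The function names and pixel values are invented for illustration.

```python
import numpy as np

def out_of_gamut_mask(image, gamut_max=1.0):
    """True for every pixel with at least one channel outside [0, gamut_max]."""
    return np.any((image < 0.0) | (image > gamut_max), axis=-1)

def blank_out_of_gamut(image, gamut_max=1.0):
    """Diagnostic view: draw out-of-gamut pixels as black holes."""
    holes = image.copy()
    holes[out_of_gamut_mask(image, gamut_max)] = 0.0
    return holes

def dim_light_to_fit(image, gamut_max=1.0):
    """Uniformly darken the image until its brightest channel just fits."""
    peak = image.max()
    return image if peak <= gamut_max else image * (gamut_max / peak)

# A 1x3 strip of a red wall: shadow, midtone, and an over-bright highlight.
strip = np.array([[[0.2, 0.1, 0.1], [0.9, 0.3, 0.3], [1.6, 0.8, 0.8]]])
mask = out_of_gamut_mask(strip)
holes = blank_out_of_gamut(strip)
dimmed = dim_light_to_fit(strip)
print(mask)
print(dimmed)
```

Uniform dimming does bring every pixel into gamut, but it darkens the whole strip by the same factor, including pixels that never had a problem.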

This was a joint research project with my colleagues Ken Fishkin, David Marimont, and Maureen Stone at Xerox PARC.

References

Glassner, Andrew S., Kenneth P. Fishkin, David H. Marimont, and Maureen C. Stone, “Device-Directed Rendering”, ACM Transactions on Graphics, Vol. 14, No. 1, January 1995, pp. 58-76.
